World Elder Abuse Awareness Day 2023

PRESIDENT BIDEN RECOGNIZES DAY WITH A PROCLAMATION

World Elder Abuse Awareness Day (WEAAD) was launched on June 15, 2006 by the International Network for the Prevention of Elder Abuse and the World Health Organization at the United Nations. The day serves as a call-to-action for individuals, organizations, and communities to raise awareness about elder abuse, neglect, and exploitation. This year, President Biden signed a proclamation, outlining some of the initiatives his Administration has taken to help older victims of abuse, including the neglect of senior residents in nursing homes. You can read his proclamation here.

* * * * * * * * * *

EVERSAFE WAS AT THE US CAPITOL ON JUNE 15TH

EverSafe was at the US Capitol on June 15th. We were invited by the Securities Industry and Financial Markets Association (SIFMA) to give remarks about HelpVul, a portal that banks, firms, and credit unions can use to report suspected fraud against at-risk adults to authorities. We also participated in a forum hosted by the Florida Securities Dealers Association focused on how to prevent elder financial exploitation. You can watch that presentation here.

Outreach for Seniors

MOBILE SERVICES – THE NEW FRONTIER

During the COVID-19 pandemic, many cities relied on mobile response teams to provide vaccinations to older adults with transportation challenges. These programs included residents in independent living facilities, assisted living facilities, and nursing homes. Their success has inspired state and local agencies across the country to replicate and expand them to offer a wide array of mobile services. Services focused on psychological well-being, medical care, treatment for substance use disorders, home health care, socialization, meals, home repairs, and connections to community-based programs are being considered. In Virginia, a new mobile outreach program that spans five counties focuses on food insecurity and social isolation in older adults. Residents can call the program coordinator to receive valuable resources, including home-delivered meals. In New York, free in-home therapy is offered to older adults who are experiencing depression or anxiety. In Colorado, a Larimer County mobile outreach program is finalizing negotiations to obtain a van that will provide books to homebound individuals who are unable to access local libraries. Delivering necessary services directly to older adults reduces travel-related barriers for seniors – and for caregivers as well.

GOVERNMENT / LEGISLATIVE UPDATE

SENIOR SECURITY ACT

Earlier this year, the House passed a bipartisan bill that aims to prevent financial scams targeting seniors. Titled the Senior Security Act, the legislation would create a new SEC taskforce to combat fraud targeting seniors. Companion bipartisan legislation has been introduced in the U.S. Senate. The taskforce would:

- Identify challenges older investors encounter, including problems associated with exploitation and cognitive decline;

- Identify areas in which senior investors would benefit from changes at the Commission or the rules of self-regulatory organizations;

- Coordinate, as appropriate, with other offices within the Commission and other taskforces that may be established within the Commission, self-regulatory organizations, and the Elder Justice Coordinating Council; and

- Consult, as appropriate, with state securities and law enforcement authorities, state insurance regulators, and other federal agencies.

SCAM ALERT

AI BEING USED FOR SCAM CALLS

Unfortunately, scams targeting older adults have become the norm. Fraudsters gain access to victims' personal information, either by mining social media or purchasing data on the Dark Web, and use it to stage phone calls that frighten grandparents. The scammer often impersonates a grandchild or another close relative in a crisis situation, like an arrest, and asks for immediate financial assistance, purportedly for bail money. These fraudsters can “spoof” the caller ID to make the incoming call appear to be coming from law enforcement or a law firm.

The alarming news is that AI, or artificial intelligence technology, has elevated the grandparent scam to another level. Fraudsters can now “mimic voices, convincing seniors that their loved ones are in distress,” according to a recent Washington Post article. According to the piece, scammers can replicate a voice from just a short audio sample, and then use AI tools to hold a conversation in that voice, which “speaks” whatever the imposter types. Experts warn that the most prominent danger of artificial intelligence is its ability to blur the line between fact and fiction and to provide criminals with effective and inexpensive tools. Recent phone scams using AI-based voice tools that are readily available online have US authorities concerned. According to Blackbird, AI-generated audio “has become almost impossible to distinguish from the human voice,” allowing fraudsters “to obtain information and sums from victims” more effectively.

Feeling Your Age?

BE LIKE CHARLIE

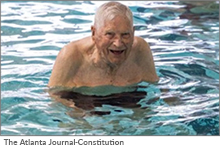

Charlie Duncan, from Atlanta, is 104 years old. A World War II veteran, he drives to an aquatic center three days a week to participate in a water aerobics class. After changing into his swimsuit, “he parks his walker – with the ‘I’m Old’ license plate – steps into the pool and blends in with the other seniors, some 40 years his junior… The centenarian is a ‘huge inspiration’ to everyone in the water aerobics class, said fellow classmate Angela McInish… ‘If he can be out here three days a week and do this, we can all do this. We don’t have any excuse.’” Charlie Duncan’s advice is simple: “‘If you’ve got it and don’t use it, you’ll lose it’ – and I don’t want to lose it.” He adds: “I try to live right, eat right… I’ve always had a big garden, and I had plenty of fresh vegetables, fruits and nuts. If you eat them, you’ll live healthily.”
